Vendor Performance Drift Detector

Mid-Size Manufacturer · Procurement & Vendor Management

PROBLEM

With the vendor document pipeline in place, a secondary problem came into focus. The same manufacturer had years of purchase order and receiving data sitting in its ERP (promised dates, actual receipt dates, line-item history), and none of it had ever been used to evaluate whether suppliers were actually performing. Lead time commitments were recorded at the PO level but never reconciled against actual receipt dates at scale, so a vendor could slip from reliable to chronically late across dozens of transactions before anyone noticed the pattern. By the time a problem vendor was identified, the damage was already done: stockouts absorbed, customer commitments missed, and expedited freight costs incurred. With procurement staff focused on day-to-day transaction work, longitudinal vendor analysis simply didn’t happen.

OBSTACLES

- Promised ship dates existed in the ERP at the PO level but were never systematically compared against actual receipt dates. For this project, on-time performance was defined as delivery within ±2 days of the vendor’s committed ship date.

- No scoring methodology existed — vendor evaluation was anecdotal, driven by whoever raised a complaint loudest rather than by data.

- Gradual performance drift was effectively invisible: a vendor slipping from 95% on-time to 70% over three months would not trigger any alert under the existing process.

- With 400+ vendors, manual review of performance trends was not a realistic weekly task for any team member.

- Supplementary signals — late confirmation emails, increased backorder notices, slower response times — existed in the inbox but were never aggregated or connected to procurement decisions.

OUTCOME

The agent pulls weekly from ERP purchase order and receiving history, reconciling promised ship dates against actual receipt dates at the line-item level for every active vendor. Each vendor receives a rolling weighted composite score incorporating on-time delivery rate, average days late, late delivery count over the trailing 30 and 90 days, and trend direction. Supplementary signals from vendor email history — confirmation response latency and backorder frequency — are incorporated as secondary inputs where consistently structured signals are available.
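The scoring step described above can be sketched roughly as follows. This is an illustrative sketch, not the production implementation: the `Receipt` record, the weight values, the per-component scaling, and the way trend is computed (last 30 days versus the prior 60) are all assumptions, since the case study states only which inputs feed the score and that weights are tuned over time. Email-derived signals are left out.

```python
from dataclasses import dataclass
from datetime import date, timedelta

TOLERANCE_DAYS = 2  # on-time window from the case study: within +/-2 days

# Hypothetical weights; the source gives no actual values.
WEIGHTS = {"on_time": 0.5, "avg_late": 0.2, "late_30": 0.15, "trend": 0.15}


@dataclass
class Receipt:
    """One received PO line (hypothetical shape of an ERP export row)."""
    promised: date
    received: date

    @property
    def days_off(self) -> int:
        return (self.received - self.promised).days

    @property
    def on_time(self) -> bool:
        return abs(self.days_off) <= TOLERANCE_DAYS

    @property
    def late(self) -> bool:
        return self.days_off > TOLERANCE_DAYS


def _rate(lines):
    """On-time rate for a list of receipts, or None if the list is empty."""
    return sum(r.on_time for r in lines) / len(lines) if lines else None


def vendor_metrics(lines, today):
    """Compute the score inputs over trailing 30- and 90-day windows."""
    def age(r):
        return (today - r.received).days

    last_30 = [r for r in lines if 0 <= age(r) < 30]
    prior_60 = [r for r in lines if 30 <= age(r) < 90]
    last_90 = last_30 + prior_60
    late_90 = [r for r in last_90 if r.late]

    rate_30, rate_prior, rate_90 = _rate(last_30), _rate(prior_60), _rate(last_90)
    # Assumption: with no receipts in either window, treat the trend as flat.
    trend = 0.0 if rate_30 is None or rate_prior is None else rate_30 - rate_prior
    return {
        # Assumption: a vendor with no receipts in 90 days is not penalized.
        "on_time_rate_90": 1.0 if rate_90 is None else rate_90,
        "avg_days_late": sum(r.days_off for r in late_90) / len(late_90) if late_90 else 0.0,
        "late_30": sum(r.late for r in last_30),
        "late_90": len(late_90),
        "trend": trend,  # negative means on-time rate is falling
    }


def composite_score(m):
    """Weighted 0-100 score; each component is scaled to 0-100 first."""
    components = {
        "on_time": 100 * m["on_time_rate_90"],
        "avg_late": max(0.0, 100 - 10 * m["avg_days_late"]),
        "late_30": max(0.0, 100 - 20 * m["late_30"]),
        "trend": 50 + 50 * m["trend"],
    }
    return sum(WEIGHTS[k] * v for k, v in components.items())
```

Scaling every component to the same 0-100 range before weighting keeps the weights interpretable, so changing one from procurement feedback has a predictable effect on the final score.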

Results are written weekly to a maintained Google Sheet vendor scorecard. When a vendor’s score crosses a defined threshold — on-time rate dropping below 80%, three or more late deliveries in a rolling 30-day window, or a sustained downward trend — a Microsoft Teams alert fires to the procurement lead with the vendor name, current score, trend direction, and a direct link to the scorecard row. Thresholds are configurable per vendor category or criticality, and score weights are refined over time based on procurement team feedback.
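A minimal sketch of the alert rules, assuming the three triggers named above. The 80% and three-late-deliveries values come from the case study; the definition of a "sustained" decline (three consecutive weekly drops), the `critical` category override, and the message layout are hypothetical. Posting the message to Teams and writing the Google Sheet row are deliberately left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    min_on_time_rate: float = 0.80  # from the case study: alert below 80% on-time
    late_30_limit: int = 3          # from the case study: 3+ late deliveries in 30 days
    down_weeks: int = 3             # hypothetical meaning of "sustained" decline


DEFAULT = Thresholds()
# Hypothetical per-category override, stricter for critical vendors.
CATEGORY_THRESHOLDS = {
    "critical": Thresholds(min_on_time_rate=0.90, late_30_limit=2, down_weeks=2),
}


def sustained_decline(weekly_scores, weeks):
    """True if each of the last `weeks` weekly scores is lower than the one before."""
    if len(weekly_scores) < weeks + 1:
        return False
    recent = weekly_scores[-(weeks + 1):]
    return all(later < earlier for earlier, later in zip(recent, recent[1:]))


def alert_reasons(on_time_rate, late_30, weekly_scores, category="standard"):
    """Return a list of triggered rules; an empty list means no alert."""
    t = CATEGORY_THRESHOLDS.get(category, DEFAULT)
    reasons = []
    if on_time_rate < t.min_on_time_rate:
        reasons.append(
            f"on-time rate {on_time_rate:.0%} is below {t.min_on_time_rate:.0%}"
        )
    if late_30 >= t.late_30_limit:
        reasons.append(f"{late_30} late deliveries in the last 30 days")
    if sustained_decline(weekly_scores, t.down_weeks):
        reasons.append(f"score declined {t.down_weeks} weeks in a row")
    return reasons


def build_alert(vendor, score, reasons, row_url):
    """Format the alert text; `row_url` is a placeholder for the scorecard link."""
    lines = [f"Vendor alert: {vendor} (score {score:.1f})"]
    lines += [f"- {reason}" for reason in reasons]
    lines.append(f"Scorecard: {row_url}")
    return "\n".join(lines)
```

Returning the list of triggered rules, rather than a single boolean, lets the alert tell the procurement lead why a vendor was flagged.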

The system does not make vendor relationship decisions. It surfaces deterioration early enough for a human to intervene.

RESULTS

- Estimated 85% of underperforming vendors flagged before a resulting stockout or missed customer shipment, compared to near-zero proactive identification under the manual process.

- Manual vendor review and reporting time eliminated — previously an ad hoc process consuming 4–6 hours per week across the procurement team.

- In observed cases, late delivery surprises from watch-listed vendors reduced by approximately 65% within the first 90 days of scoring adoption.

- Procurement team shifted from reactive vendor management to a weekly review cadence driven by ranked exception lists rather than inbox triage.

Creating a vendor feedback platform to enable vendors and suppliers to exchange feedback and gain insights into working relationships for Abbott.

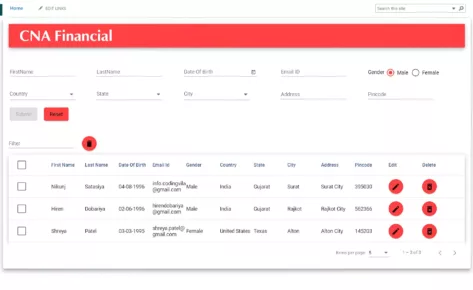

Empowering the US Chamber of Commerce with a versatile white label portal, an intuitive financial dashboard, and a custom API for seamless data integration.

Developing a lightning-fast, fuzzy search solution for a Sharepoint-integrated Angular JS app.

Empowering an Industry Leader with Email Migration and Security Enhancement

Enhancing customer support for an online charity auction platform with integrated CRM, sentiment analysis, and streamlined communication channels.

Creating a bespoke, personalized website for a leading weight loss clinic, emphasizing individualized care and secure communication of sensitive medical information.

Building a first of it’s kind procurement feedback platform for Diversity Suppliers / Buyers.

Overhaul of search and tagging structure using NLP, translated into 4 languages

Developing a custom dashboard with seamless AI integrations and API connections to empower SMB owners with enhanced performance visibility and streamlined data management.

Empowering Hoodle with strategic marketing support to implement ClickFunnels effectively and offering design mockups to breathe new life into their website

Early development of the website increased daily traffic to 180,000 users.

The Italian Trade Agency works alongside Italian companies to ensure them the greatest success on international markets and to encourage foreign companies to look to Italy as a reliable global partner.

Empowering a law firm through a website overhaul, Google Analytics strategy and implementation, and targeted PPC campaigns.

Silgan Closures manufacture Plastic bottle caps.